Michele's blog

Advancing the theory of gravitational-wave detection with pulsar-timing arrays

2014/07/24

My postdoc Rutger van Haasteren and I recently completed two new papers that (we believe) represent significant steps forward in the quest to implement gravitational-wave searches that can ingest large pulsar-timing-array datasets, such as those assembled by the International Pulsar-Timing Array.

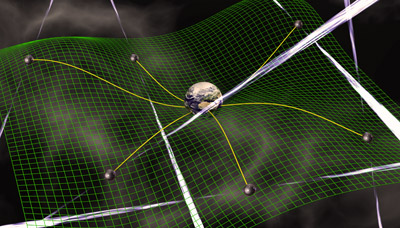

(As a reminder, the idea behind a pulsar-timing array is that of using Galactic millisecond pulsars as nearly perfect clocks, looking for gravitational waves as deviations in the strict periodicity of the pulse times of arrival. More here.)

In our first paper, the result of almost two years of work, Rutger and I survey, refine, and extend the emerging formalism that models timing noise and errors as Gaussian processes. In gravitational-wave searches in pulsar-timing data, we need to be able to distinguish between different kinds of superimposed, stochastic signals: the noise from all the complicated physics that happens in and around the neutron star, and in the propagation of the pulses out to Earth; the errors due to modeling and fitting imperfectly the deterministic temporal structure of the times of arrival; and the contributions due to gravitational waves (which, unlike the others, will be the same for all pulsars). Gaussian processes allow us to put this distinction on a sound statistical basis.

In the same paper we also figure out a novel and much more efficient way to sample the posterior distribution of the noise parameters (in Monte Carlo fashion), making it possible to run a full noise analysis (e.g., a study that does not assume the parameters of the noise, but rather infers them from the data) on large datasets (decade long, with tens of pulsars). I cannot expatiate on this without getting far more technical (see the paper itself if you're curious); suffice it to say that our solution is a creative adaptation of the idea of Gibbs sampling.

In our second paper, the fruit of two weeks of sudden inspiration and feverish work, Rutger and I describe an accurate and efficient way to speed up a crucial mathematical operation that is required at every step in Gaussian-process-based searches, and that indeed dominates their computational cost. This operation is (effectively) the inversion of a large dense matrix, with size n by n, corresponding to the number of points in a dataset (i.e., many thousands). The cost of such an inversion is proportional to the cube of n: too much.

What we do instead (again, effectively), is to compute an approximate inverse, the cost of which is proportional to the cube of the effective number of degrees of freedom of the problem. Armed with this optimization, and with the fast sampling scheme described above, large-dataset searches become feasible in hours on a notebook computer, rather than in days on a supercomputing cluster.

(More about all this here.)

© M. Vallisneri 2014 — last modified on 2014/07/24